News Release

GIGALIGHT Reaches Bulk Deployment of 400G/800G Silicon Optical Modules Leveraging Custom COP™ Process Platform


8-Channel Optics Technology

GIGALIGHT’s Years of 8-Channel Optics Mastery Elevating 800G SR8/DR8 Performance

Products & Solutions

GIGALIGHT focuses on developing decoupled optical network modules and subsystems to reduce capital expenditure (CAPEX) and operating expenditure (OPEX) for data centers and telecom operators. Since its establishment, the company has actively cooperated with global operators to realize the interconnection of optical networks, and has been widely recognized as an advocate and leader of open optical interconnection middleware.

Data Center & Cloud Computing

- Immersion Liquid Cooling

- Silicon Photonics

- III-V Transceivers

- High Speed Interconnect Cable

- Optical Network Adapter

5G & Metro Network

- 5G Fronthaul Transceivers

- 5G Fronthaul HAOC

- 5G OMUX & Metro WDM Passive

- 5G Mid/Backhaul Transceivers

- ETHERNET/SDH/OTN Transceivers

Open DWDM Network

- COLOR ZR+ DWDM Module

- COLOR ZR+ DWDM Subsystem

- Coherent Optical Module

- Coherent DCI BOX & Cards

- Passive AAWG DWDM


News & Events

Press Releases, Upcoming Events, Industry Insights and Marketing Reports

3 Case Studies that prove Liquid Cooling is the best solution for Next-Generation Data Centers

In the relentless pursuit of efficiency, scalability, and sustainability, the data center industry finds itself at the precipice of a groundbreaking transformation. This article highlights a game-changing innovation that is poised to reshape the landscape of data center infrastructure: liquid cooling. With ever-increasing demands for computing power, conventional air cooling methods are reaching their limits, prompting a paradigm shift towards liquid cooling as the future of data centers.

Liquid cooling, once reserved for niche applications, is now emerging as a scalable and efficient solution that promises to unlock the full potential of modern data centers. By dissipating heat more effectively and enabling higher-density deployments, liquid cooling addresses the escalating power densities of advanced computing equipment and positions data centers to handle the demands of emerging technologies like artificial intelligence, cloud computing, and edge computing.

In this article, we will delve into the transformative capabilities of liquid cooling and explore the numerous benefits it offers over traditional air cooling methods. From energy efficiency and reduced operational costs to improved performance and environmental sustainability, liquid cooling is leading the charge in revolutionizing data center technology.

What is Liquid Cooling?

Liquid cooling, specifically immersion cooling, is an advanced method utilized by data centers to efficiently dissipate heat from IT hardware. In immersion cooling, the IT hardware, including processors, GPUs, and other components, is submerged directly into a non-conductive liquid. This liquid acts as an effective coolant, absorbing the heat generated by the hardware during its operation.

The key principle behind liquid cooling is direct and efficient heat transfer. As the IT hardware operates, it produces significant amounts of heat due to high-performance computing tasks, such as Artificial Intelligence, Automation, and Machine Learning. To maintain optimal performance and prevent overheating, the generated heat must be dissipated effectively.

In immersion cooling, the liquid coolant surrounds the hardware components, allowing for direct contact with the heat sources. As a result, the heat is rapidly and efficiently transferred from the components to the liquid coolant, ensuring effective cooling of the hardware.

One of the most significant advantages of liquid cooling, especially immersion cooling, is its unparalleled efficiency and scalability. By directly immersing the hardware in the coolant, immersion cooling can handle substantially higher heat densities compared to traditional air-based cooling systems. This capability makes it an ideal choice for data centers with demanding workloads and high power densities.

Furthermore, immersion cooling offers virtually unlimited capacity for data centers. As data center demands continue to increase, and high-performance computing trends evolve, such as AI, Automation, and Machine Learning, immersion cooling proves to be a future-proofing solution. It can sustain the power requirements and efficiently manage the rising heat production, ensuring data centers remain flexible and adaptable to emerging technologies.

Case studies

Case Study 1: Lawrence Livermore National Laboratory (LLNL)

Industry: Research and High-Performance Computing

Challenge: LLNL operates some of the world’s most powerful supercomputers for scientific research, including climate modeling, nuclear simulations, and astrophysics. These supercomputers generate immense heat, requiring an efficient cooling solution to maintain peak performance.

Solution: LLNL adopted an immersion cooling solution for its HPC systems, submerging the server racks directly in a non-conductive cooling fluid.

Benefits:

Improved Cooling Efficiency: Liquid cooling effectively transferred heat away from critical components, enabling LLNL to run their supercomputers at higher power densities while maintaining optimal performance.

Energy Savings: The increased cooling efficiency allowed LLNL to reduce the power consumption required for cooling, resulting in significant energy savings.

Footprint Reduction: Liquid cooling eliminated the need for traditional air cooling infrastructure, reducing the physical footprint of the data center and enabling more efficient space utilization.

Noise Reduction: The absence of cooling fans and reduced airflow resulted in a quieter and more pleasant working environment.

Case Study 2: Advania Data Centers

Industry: Data Hosting and Cloud Services

Challenge: Advania Data Centers faced the challenge of efficiently cooling high-density server racks for their cloud services and data hosting operations.

Solution: Advania Data Centers adopted an innovative two-phase liquid cooling solution to address the demanding cooling requirements.

Benefits:

Scalability: The liquid cooling system allowed Advania to deploy high-density server racks without compromising on cooling capacity, enabling efficient resource utilization.

Sustainable Operations: The liquid cooling system reduced the data center’s overall energy consumption, contributing to lower carbon emissions and aligning with their sustainability initiatives.

High Performance: With efficient cooling, Advania Data Centers achieved consistent and reliable performance for their cloud services, ensuring excellent service levels for their customers.

Cost Savings: The reduced energy consumption and improved performance led to significant cost savings for Advania Data Centers over time.

Case Study 3: Tech Data Advanced Solutions

Industry: High-Performance Computing Solutions

Challenge: Tech Data Advanced Solutions needed a cooling solution to support their high-performance computing clusters used for artificial intelligence and data analytics.

Solution: Tech Data Advanced Solutions deployed a liquid cooling system that utilized direct-to-chip cooling technology.

Benefits:

Overclocking Capabilities: Liquid cooling enabled Tech Data to overclock their CPUs, achieving higher processing speeds and maximizing computing performance.

Reduced Maintenance: Liquid cooling minimized dust buildup and prevented air circulation issues, reducing the need for frequent maintenance and downtime.

Enhanced Performance: With improved cooling efficiency, Tech Data experienced higher CPU performance and optimized computing power for their HPC clusters.

Future-Proofing: The liquid cooling solution positioned Tech Data to handle the heat requirements of future hardware upgrades and emerging technologies.

These case studies exemplify how liquid cooling delivers significant benefits to data centers across various industries. From increased cooling efficiency and energy savings to scalability, sustainability, and improved performance, liquid cooling proves to be a game-changing technology that ensures data centers can meet the evolving demands of high-performance computing and remain at the forefront of innovation in the digital age.

The Future of Liquid Cooling

In conclusion, liquid cooling, specifically immersion cooling, is a game-changing technology that data centers are adopting to address the escalating demands of high-performance computing. By directly submerging IT hardware into a non-conductive liquid, liquid cooling enables efficient heat dissipation and supports the sustained performance of critical components. As the data center industry embraces cutting-edge trends and advances in AI, Automation, and Machine Learning, liquid cooling stands at the forefront, offering the highest level of efficiency and ensuring the future-proofing of data center infrastructure.

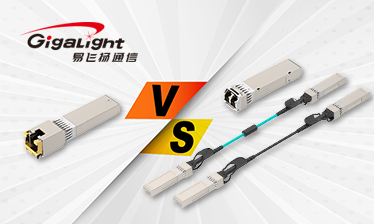

Is A Copper Transceiver Or An Optical Transceiver, DAC, Or AOC Better?

The copper transceiver is a communication interface that transmits through copper cables (such as twisted pair network cables). It comes in the same pluggable form factors, such as SFP, as optical transceivers.

With the rapid development of communication technology, various transceivers have sprung up, among which copper transceivers, optical transceivers, DAC (Direct Attach Cable) and AOC (Active Optical Cable) have become hot choices in the industry. These transceivers have their own strengths and play important roles in different scenarios. To better meet communication needs, we need to deeply understand their characteristics and advantages in order to make wise choices in practical applications. Next, this article will focus on the comparison between copper transceivers, optical transceivers, DAC, and AOC, aiming to help readers better understand and choose suitable communication transceivers for themselves.

Copper Transceiver VS DAC

Both the copper transceiver and the DAC are suitable for short-distance transmission applications, and both transmit over copper cables. However, the transmission distance of the copper transceiver is longer than that of the DAC. The transmission distance of the DAC generally does not exceed 7m, while a 10G copper transceiver can transmit up to 30m.

Copper Transceiver VS AOC

The copper transceiver uses copper cable for transmission, while the AOC uses optical cable. Therefore, the AOC has a longer transmission distance, up to 300m. The AOC also offers enhanced signal integrity and flexible deployment, and allows better air flow and heat dissipation in the computer room cabling system, but its cost is higher than copper cabling at the 10G rate.

Copper Transceiver VS Optical transceiver

Copper transceivers use copper cables for wiring, while optical transceivers require optical fibers. If the existing wiring system uses copper cables, copper transceivers can reuse those cabling resources to complete a 10G network deployment. In terms of transmission distance, copper transceivers are relatively short-range, while optical transceivers can reach as far as 120km, depending on the combination of module and fiber.

GIGALIGHT Copper Transceiver

100M Copper SFP, including 100M single rate and 10/100M adaptive rate, adopts RJ45 interface, and can transmit up to 100m with Cat5/5e network cable. There are two options: commercial grade and industrial grade.

1G Copper SFP, including 1G and 10/100/1000M adaptive rate, also uses an RJ45 interface, can transmit up to 100m with Cat5/5e network cable, and the temperature range is commercial grade.

10G Copper SFP supports 10G rate transmission, uses RJ45 interface, and can transmit up to 30m with Cat6/6A network cable, and the temperature range is commercial grade.
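The reach figures quoted for the three copper SFP families can be kept in a small lookup table when planning cable runs. The following is a minimal sketch, not a GIGALIGHT tool: the names `CopperSfp`, `COPPER_SFPS`, and `copper_link_ok` are hypothetical, and only the rates, cable categories, and distances come from the descriptions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CopperSfp:
    rate: str         # nominal line rate label
    cable: str        # cable category quoted in the text
    max_reach_m: int  # maximum reach quoted in the text, in metres


# Figures copied from the product descriptions above.
COPPER_SFPS = {
    "100M": CopperSfp("100M", "Cat5/5e", 100),
    "1G": CopperSfp("1G", "Cat5/5e", 100),
    "10G": CopperSfp("10G", "Cat6/6A", 30),
}


def copper_link_ok(rate: str, length_m: float) -> bool:
    """Return True if a copper SFP at `rate` can cover `length_m` metres."""
    return length_m <= COPPER_SFPS[rate].max_reach_m


if __name__ == "__main__":
    for rate, length in [("1G", 90), ("10G", 30), ("10G", 45)]:
        print(rate, length, "m ->", copper_link_ok(rate, length))
```

A run longer than the quoted reach (for example 45m at 10G) is the case where an AOC or optical transceiver would be considered instead.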

Today, copper transceivers are still widely used in Ethernet, especially for connections within data centers and local area networks. They are known for their reliability and cost-effectiveness, making them a popular choice for many organizations. However, as the demand for higher data rates and bandwidth continues to increase, some applications have turned to optical transceivers because of their ability to support higher data rates and longer transmission distances.

Overall, copper transceivers continue to play a vital role in network infrastructure, providing a stable and efficient way to transmit data over copper cables. Their versatility and compatibility with existing network setups make them an essential component in modern communication systems.

GIGALIGHT Innovatively Launches Linear 400G DR4 CPO Silicon Optical Engine

Shenzhen, China, March 25, 2024 – GIGALIGHT recently announced the launch of a 400G DR4 CPO silicon optical engine based on linear direct drive technology. This is a subset of GIGALIGHT’s innovative products for the next optical network era:

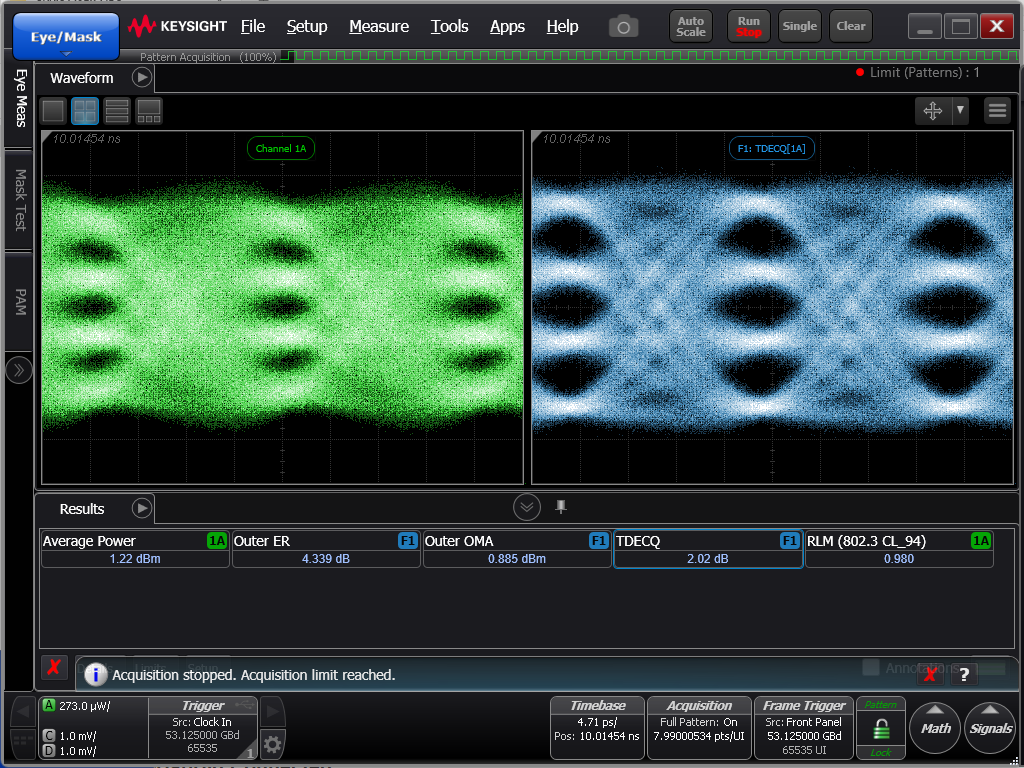

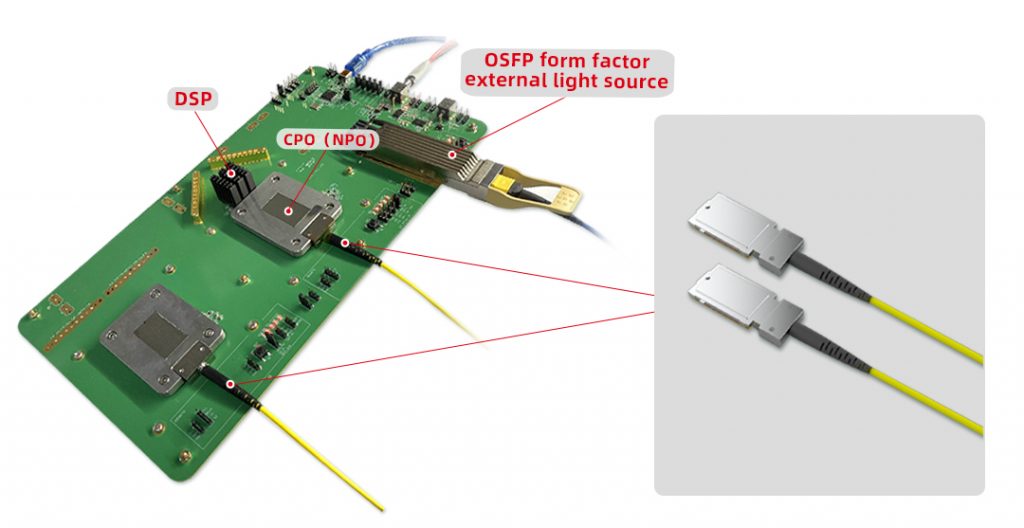

1. The linear silicon optical engine 400G DR4 CPO combines SOCKET slot packaging with LPO linear direct drive technology. Compared with the traditionally defined NPO/CPO, linear CPO silicon photonic engines remove the DSP, which can significantly reduce system-level power consumption and cost. The optical power emitted from the TX end of this engine is in the range of 0-3dBm, and the extinction ratio falls in the range of 4-5dB. TDECQ is below 2.5dB and generally falls within 1-2dB. The wavelength is 1304.5nm-1317.5nm, SMSR is at least 30dB, and the RX end OMA sensitivity is below -5.5dBm. The eye diagram is as follows:

2. Through the test board with DSP and external pluggable light source specially developed by GIGALIGHT, the signal of the linear 400G CPO optical engine can be completely restored.

3. GIGALIGHT plans to upgrade the engine to 800G and 1.6T through higher-density advanced packaging technology.
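The optical figures quoted in item 1 can be written down as a limits table and used to screen a measurement. This is only an illustrative sketch: treating the quoted ranges as pass/fail limits (with inclusive bounds) is an assumption, the names `SPEC_LIMITS` and `out_of_spec` are hypothetical, and the sample readings are invented for the example rather than taken from any test report.

```python
# Limits taken from the figures quoted in the text: (lower, upper); None = unbounded.
# Bounds are treated as inclusive here, which is a simplifying assumption.
SPEC_LIMITS = {
    "tx_power_dbm": (0.0, 3.0),
    "extinction_ratio_db": (4.0, 5.0),
    "tdecq_db": (None, 2.5),
    "wavelength_nm": (1304.5, 1317.5),
    "smsr_db": (30.0, None),
    "rx_oma_sensitivity_dbm": (None, -5.5),
}


def out_of_spec(measured: dict) -> list:
    """Return the names of all parameters that fall outside SPEC_LIMITS."""
    failures = []
    for name, (low, high) in SPEC_LIMITS.items():
        value = measured[name]
        if (low is not None and value < low) or (high is not None and value > high):
            failures.append(name)
    return failures


if __name__ == "__main__":
    # Hypothetical readings, for illustration only.
    sample = {
        "tx_power_dbm": 1.5,
        "extinction_ratio_db": 4.5,
        "tdecq_db": 1.6,
        "wavelength_nm": 1310.0,
        "smsr_db": 35.0,
        "rx_oma_sensitivity_dbm": -6.0,
    }
    print(out_of_spec(sample))
```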

GIGALIGHT’s research on CPO originated from the Shenzhen Science and Technology Innovation Commission’s co-packaged optics (CPO) project undertaken by the company. Linear CPO is a relatively friendly technology form that can implement higher-speed and higher-density switches under the constraint of limited low-nanometer manufacturing processes, while reducing power consumption, improving system interconnection reliability, and truly realizing the goal of removing the DSP from next-generation switches. Compared with pluggable LPO technology, whose fundamental flaws the industry is still working to resolve, linear CPO is a stable technology. Objectively speaking, linear CPO is technically inspired by LPO and the original CPO technology, and mainly aims to solve problems such as system signal consistency and reliability more easily. GIGALIGHT will continue to delve into silicon optical linear CPO technology solutions.

About GIGALIGHT

As an open optical networking explorer, Gigalight integrates the design, manufacturing, and sales of both active and passive optical devices and subsystems. The company’s product portfolio includes optical modules, silicon photonics modules, liquid-cooled modules, passive optical components, active optical cables, direct attach copper cables, coherent optical communication modules, and OPEN DCI BOX subsystems. Gigalight focuses on serving applications such as data centers, 5G transport networks, metropolitan WDM transmission, ultra-HD broadcast and video, and more. It stands as an innovative designer of high-speed optical interconnect hardware solutions.

Looking ahead to 2024 data center infrastructure

Recently, Dell’Oro Group released a forecast report, reviewing the outstanding trends in its 2023 data center infrastructure forecasts and looking forward to the development of data center infrastructure in 2024. The following is the report content.

Outstanding Trends Highlighted In 2023 Forecasts

- Data center capex growth in 2023 has decelerated noticeably, as projected after a surge of spending growth in 2022. The top 4 US cloud service providers (SPs) in aggregate slowed their capex significantly in 2023, with Amazon and Meta undergoing a digestion cycle, while Microsoft and Google are on track to increase their greenfield spending on accelerated computing deployments and data centers. The China Cloud SP market remains depressed as cloud demand remains soft from macroeconomic and regulatory headwinds, although there are signs of a turnaround in second-half 2023 from AI-related investments. The enterprise server and storage system market performed worse than expected, as most of the OEMs are on track to experience a double-digit decline in revenue growth in 2023 from a combination of inventory correction and lower end-demand given the economic uncertainties. However, network and physical infrastructure OEMs have fared better in 2023 because strong backlog shipments fulfilled in the first half of 2023 lifted revenue growth.

- We underestimated the impact of accelerated computing investments to enable AI applications in 2023. During that year, we saw a pronounced shift in spending from general-purpose computing to accelerated computing and complementary equipment for network and physical infrastructure. AI training models have become larger and more sophisticated, demanding the latest advances in accelerators such as GPUs and network connectivity. The high cost of AI-related infrastructure that was deployed helped to offset the sharp decline in the general-purpose computing market. However, supplies on accelerators have remained tight, given strong demand from new hyperscale.

- General-purpose computing has taken a backseat to accelerated computing in 2023, despite significant CPU refreshes from Intel and AMD with their fourth-generation processors. These new server platforms feature the latest in server interconnect technology, such as PCIe 5, DDR5, and more importantly CXL. CXL has the ability to aggregate memory usage across servers, improving overall utilization. However, general-purpose server demand has been soft, and the transition to the fourth-generation CPU platforms has been slower than expected (although AMD made significant progress in 3Q23). Furthermore, CXL adoption is limited to the hyperscale market, with limited use cases.

- Server connectivity is advancing faster than what we had expected a year ago. In particular, accelerated computing is on a speed transition cycle at least a generation ahead of the mainstream market. Currently, accelerated servers with NVIDIA H100 GPUs feature network adapters at up to 400 Gbps with 112 Gbps SerDes, and bandwidth will double in the next generation of GPUs a year from now. Furthermore, Smart NIC adoption continues to grow, though it is mostly limited to the hyperscale market. According to our Ethernet Adapter and Smart NIC report, Smart NIC revenues increased by more than 50% in 2023.

- The edge computing market has been slow to materialize, and we reduced our forecast in the recent Telecom Server report, given that the ecosystem and more compelling use cases need to be developed, and that additional adopters beyond the early adopters have been limited.

According to our Data Center IT Capex report, we project data center capex to return to double-digit growth in 2024 as market conditions normalize. Accelerated computing will remain at the forefront of capex plans for the hyperscalers and enterprise market to enable AI-related and other domain specific workloads. Given the high cost of accelerated servers and their specialized networking and infrastructure requirements, the end-users will need to be more selective in their capex priorities. While deployments of general-purpose servers are expected to rebound in 2024, we believe greater emphasis will be made to increase server efficiency and utilization, while curtailing cost increases.

Below, we highlight key trends that can enhance the optimization of the overall server footprint and decrease the total cost of ownership for end-users:

Accelerated Computing Maintains Momentum

We estimate that 11% of the server unit shipments are accelerated in 2023, and are forecast to grow at a five-year compound annual growth rate approaching 30%. Accelerated Servers contain accelerators such as GPUs, FPGAs, or custom ASICs, and are more efficient than general-purpose servers when matched to domain-specific workloads. GPUs will likely remain as the primary choice for training large AI models, as well as running inference applications. While NVIDIA currently has a dominant share in the GPU market, we anticipate that other vendors such as AMD and Intel will gain some share over time as customers seek greater vendor diversity. Greater choices in the supply chain could translate to much-needed supply availability and cost reduction to enable sustainable growth of accelerated computing.
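To get a feel for what a five-year compound annual growth rate approaching 30% implies, the compounding can be worked through directly. This is a minimal sketch of the standard CAGR arithmetic, not a figure from the report: the function names are hypothetical, and 30% is used as an upper bound because the text says "approaching 30%".

```python
def cagr_multiple(rate: float, years: int) -> float:
    """Total growth multiple after compounding `rate` annually for `years` years."""
    return (1.0 + rate) ** years


def cagr_from_multiple(multiple: float, years: int) -> float:
    """Inverse calculation: the annual rate that yields `multiple` over `years` years."""
    return multiple ** (1.0 / years) - 1.0


if __name__ == "__main__":
    multiple = cagr_multiple(0.30, 5)
    print(f"Growth multiple after 5 years at 30%: {multiple:.2f}x")
    print(f"Recovered annual rate: {cagr_from_multiple(multiple, 5):.2%}")
```

At that upper bound, accelerated server shipments would grow to a little under four times their starting level over five years.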

Advancements in Next-Generation Server Platform

General-purpose servers have been increasing in compute density, as evolution in the CPUs is enabling servers with more processor cores per CPU, memory, and bandwidth. Ampere Computing’s Altra Max and AMD’s Bergamo are offered with up to 128 cores per processor, and Intel’s Granite Rapids (available later this year) will have a similar number of cores per processor. Less than seven years ago, Intel’s Skylake CPUs were offered with a maximum of 28 cores. The latest generation of CPUs also contains onboard accelerators that are optimized for AI inference workloads.

Lengthening of the Server Replacement Cycle

The hyperscale cloud SPs have lengthened the replacement cycle of general-purpose servers. This measure has the impact of reducing the replacement cost of general-purpose servers over time, enabling more capex to be allocated to accelerated systems.

Disaggregation of Compute, Memory, and Storage

Compute and storage have been disaggregated in recent years to improve server and storage system utilization. We believe that next-generation rack-scale architectures based on CXL will enable a greater degree of disaggregation, benefiting the utilization of compute cores, memory, and storage.

Source: Data Center Infrastructure—a Look into 2024, Baron Fung, Dell’Oro Group

GIGALIGHT 400G QSFP-DD SR4, Exclusive Design To Help Data Center Interconnection

As we move towards the supercomputing era, data centers are developing rapidly. With the continuous evolution of technology, the rise of AI supercomputing is leading the wave of innovation in the digital field. In this data-centric era, data centers need not only to expand in scale but also, urgently, to transmit data at high speed. Transmission rates of 400G/800G are redefining data center infrastructure.

In this article, Gigalight introduces you to a brand new 400G product: 400G QSFP-DD SR4 optical module, and see how it is used in today’s data centers.

Existing 400G network solutions

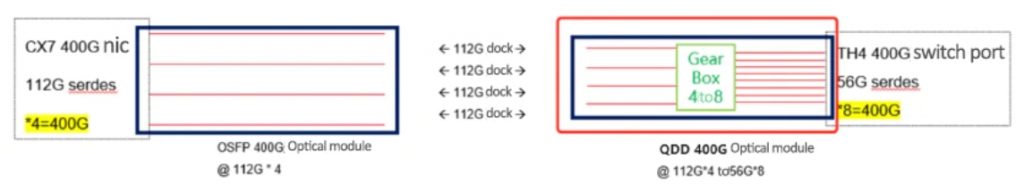

The high-speed network in a GPU cluster mainly consists of high-speed network cards, optical connectors (optical transceivers and cables) and high-speed switches. Currently, servers equipped with H800 GPUs mainly use NVIDIA CX7 400G network cards, and there are many choices of switches on the market. These options include 64×400G QDD switches based on BCM 25.6T Tomahawk4 (TH4) chips, 64×800G switches based on BCM 51.2T chips, switches based on Cisco Nexus chips, and 400G/800G switches based on NVIDIA Spectrum chips. From a market perspective, 400G QDD switches are currently the dominant choice. The following figure shows the commonly used network architecture of clusters:

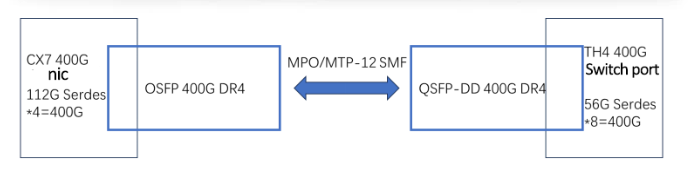

It can be observed that the mainstream 400G switches in the market use 56G SerDes, while the CX7 network card uses 112G SerDes. In order to keep the network connected, this difference needs to be considered when selecting optical transceivers to ensure component compatibility and proper operation. As shown in the picture:

In order to solve this problem, everyone currently chooses 400G QSFP-DD DR4 optical modules on the switch side, and uses 400G OSFP DR4 optical modules on the server side. The connection is shown below:

This is a good solution within a connection distance of less than 500m, but if the connection distance does not exceed 100m, choosing the DR4 solution is a bit wasteful. Therefore, the short-distance 400G QSFP-DD SR4, which uses a low-cost 850nm VCSEL laser, is a good choice. It is much cheaper than DR4 and can meet the transmission distance requirement of 100m.

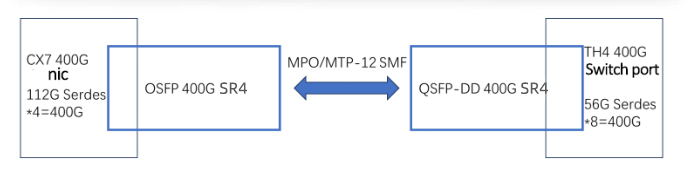

The 400G QSFP-DD SR4 is inserted into a 400G QSFP-DD 8×50G PAM4 port and can be interconnected with a 400G OSFP (4×100G PAM4) network card, so that interconnection between 400G QSFP-DD SR4 and 400G OSFP SR4 modules can be realized.

Gigalight’s newly launched 400G QSFP-DD SR4 uses 8×50G PAM4 modulation on the electrical port and 4×100G PAM4 modulation on the optical port. Compared with the 400G QSFP-DD SR8, it uses four fewer lasers, which lowers both price and power consumption (less than 8W). Using an MPO12 optical port, it supports 60m transmission over OM3 multi-mode fiber and 100m transmission over OM4 multi-mode fiber.
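The lane arithmetic behind this module is simple to verify: the electrical and optical sides carry the same nominal aggregate rate, with two electrical lanes mapped onto each optical lane. The sketch below uses nominal rates only and hypothetical names (`aggregate_gbps`, `fiber_supports`); the lane counts and reach figures are the ones quoted above.

```python
# Nominal lane configuration quoted in the text.
ELECTRICAL_LANES, ELECTRICAL_GBPS = 8, 50   # 8x50G PAM4 host side
OPTICAL_LANES, OPTICAL_GBPS = 4, 100        # 4x100G PAM4 line side

# Reach per multi-mode fiber type quoted in the text, in metres.
REACH_M = {"OM3": 60, "OM4": 100}


def aggregate_gbps(lanes: int, gbps_per_lane: int) -> int:
    """Nominal aggregate rate of a group of equal-rate lanes."""
    return lanes * gbps_per_lane


def fiber_supports(fiber: str, length_m: float) -> bool:
    """Return True if the quoted reach for `fiber` covers `length_m` metres."""
    return length_m <= REACH_M[fiber]


if __name__ == "__main__":
    electrical = aggregate_gbps(ELECTRICAL_LANES, ELECTRICAL_GBPS)
    optical = aggregate_gbps(OPTICAL_LANES, OPTICAL_GBPS)
    print(f"Electrical: {electrical}G, optical: {optical}G")
    print(f"Electrical lanes per optical lane: {ELECTRICAL_LANES // OPTICAL_LANES}")
    print("OM3 at 80m supported:", fiber_supports("OM3", 80))
```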

The release of Gigalight’s 400G QSFP-DD SR4 fills the gap in short-distance 400G data center interconnection solutions and provides a more comprehensive choice for your data center.

AI data center architecture upgrade triggers surge in demand for 800G optical transceivers

The surge in demand for 800G optical transceivers directly reflects the increasing demand for artificial intelligence-driven applications. As the digital environment continues to evolve, the need for faster and more efficient data transmission becomes imperative. The deployment of 800G optical transceivers, coupled with the transition to a two-tier leaf-spine architecture, reflects a strategic move to meet the needs of modern computing.

Why do we need 800G optical transceivers?

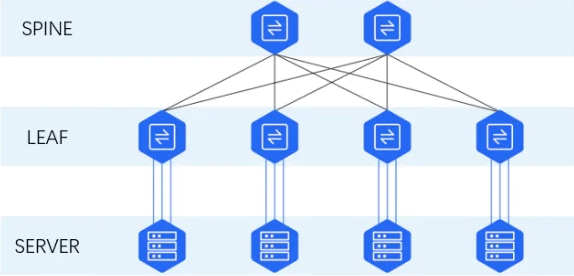

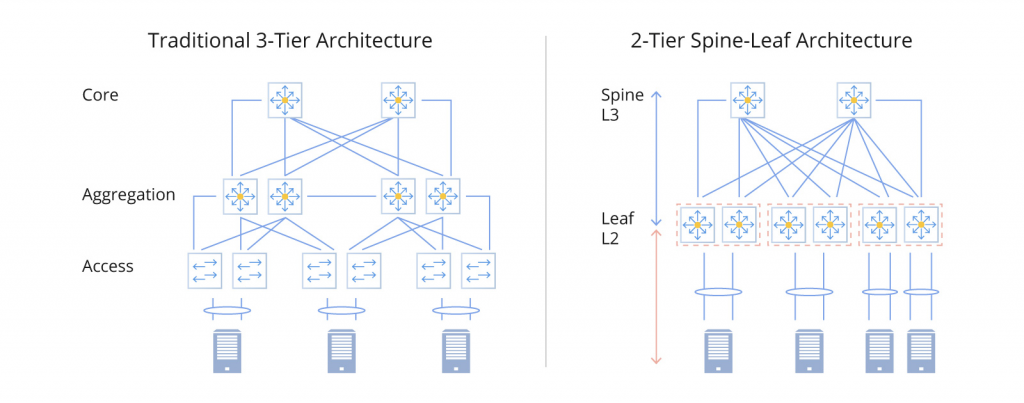

The surge in demand for 800G optical transceivers is closely related to changes in data center network architecture. The traditional three-tier architecture (including access tier, aggregation tier and core tier) has been the standard for many years. As the scale of AI technology and east-west traffic continue to expand, data center network architecture is also constantly evolving. In the traditional three-tier topology, data exchange between servers needs to go through access switches, aggregation switches and core switches, which puts huge pressure on aggregation switches and core switches.

If the server cluster scale continues to expand according to the traditional three-tier topology, high-performance equipment will need to be deployed at the core tier and aggregation tier, resulting in a significant increase in equipment costs. This is where the new Spine-Leaf topology comes into play, flattening the traditional three-tier topology into a two-tier architecture. The adoption of 800G optical transceivers has promoted the emergence of Spine-Leaf network architecture, which has many advantages such as high bandwidth utilization, excellent scalability, predictable network delay, and enhanced security.

In this architecture, leaf switches serve as access switches in the traditional three-tier architecture and are directly connected to servers. Spine switches act as core switches, but they are directly connected to Leaf switches, and each Spine switch needs to be connected to all Leaf switches.

The number of downlink ports on a Spine switch determines the number of Leaf switches, and the number of uplink ports on a Leaf switch determines the number of Spine switches. Together, they determine the scale of the Spine-Leaf network.
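The way port counts bound the size of a Spine-Leaf fabric can be sketched with a few lines of arithmetic. The model below assumes exactly one link between every Leaf and every Spine, so the Spine count equals the Leaf uplink count and the Leaf count is capped by the ports on one Spine; the function names and the 64-port example are hypothetical and do not describe a specific product.

```python
def leaf_spine_size(spine_ports: int, leaf_uplinks: int, leaf_downlinks: int) -> dict:
    """Upper bounds for a two-tier fabric with one link per Leaf-Spine pair."""
    return {
        "spines": leaf_uplinks,            # each Leaf uplink lands on a different Spine
        "max_leaves": spine_ports,         # each Spine port serves a different Leaf
        "max_servers": spine_ports * leaf_downlinks,
    }


def fabric_transceivers(spines: int, leaves: int) -> int:
    """Transceivers on Leaf-Spine links: one per link end, two per link."""
    return 2 * spines * leaves


if __name__ == "__main__":
    # Hypothetical example: 64-port switches, Leaf ports split 32 up / 32 down.
    size = leaf_spine_size(spine_ports=64, leaf_uplinks=32, leaf_downlinks=32)
    print(size)
    print("Leaf-Spine transceivers:", fabric_transceivers(size["spines"], size["max_leaves"]))
```

Because every Leaf connects to every Spine, the number of inter-switch links grows with the product of the two switch counts, which is why this topology consumes so many optical transceivers.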

The Leaf-Spine architecture significantly improves the efficiency of data transmission between servers. When the number of servers needs to be expanded, the scalability of the data center can be enhanced by simply increasing the number of Spine switches. The only drawback is that the Spine-Leaf architecture requires a large number of ports compared to the traditional three-tier topology. Therefore, servers and switches require more optical transceivers for fiber optic communications, stimulating the demand for 800G optical transceivers.

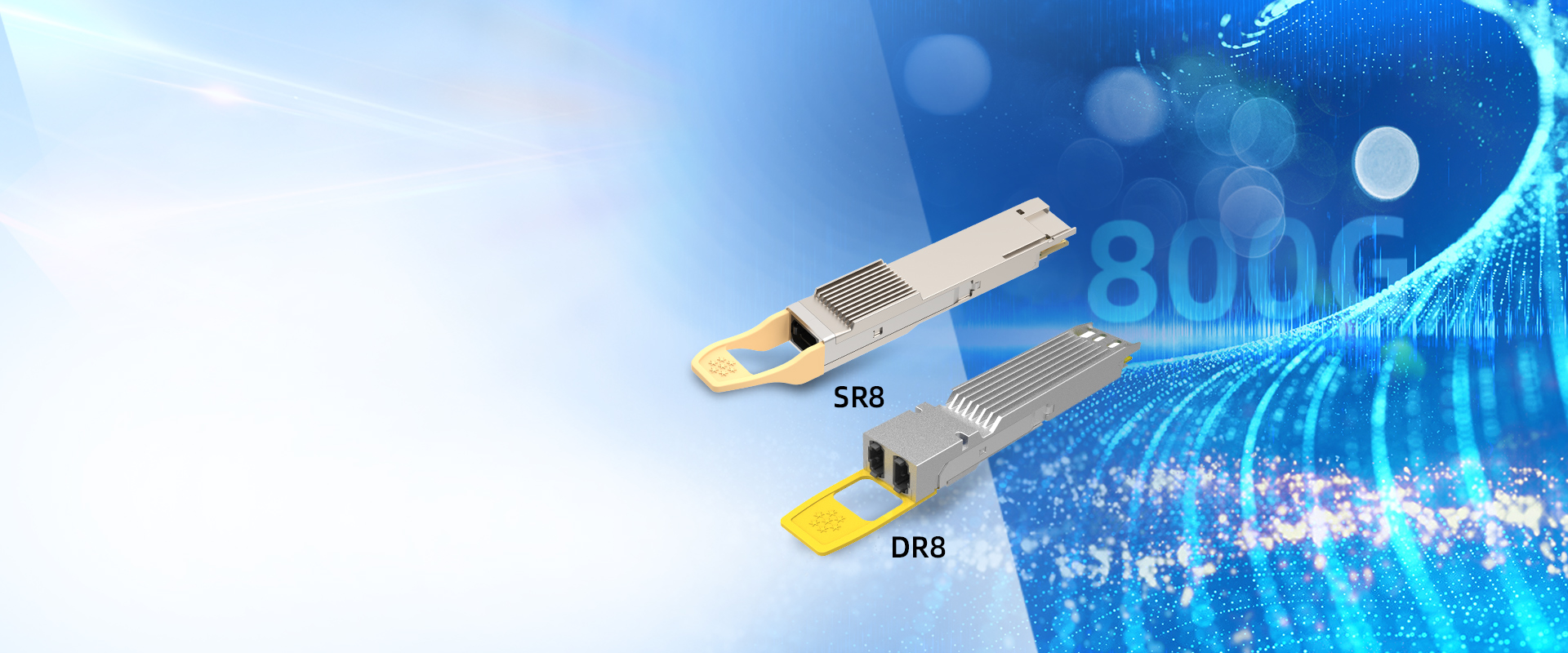

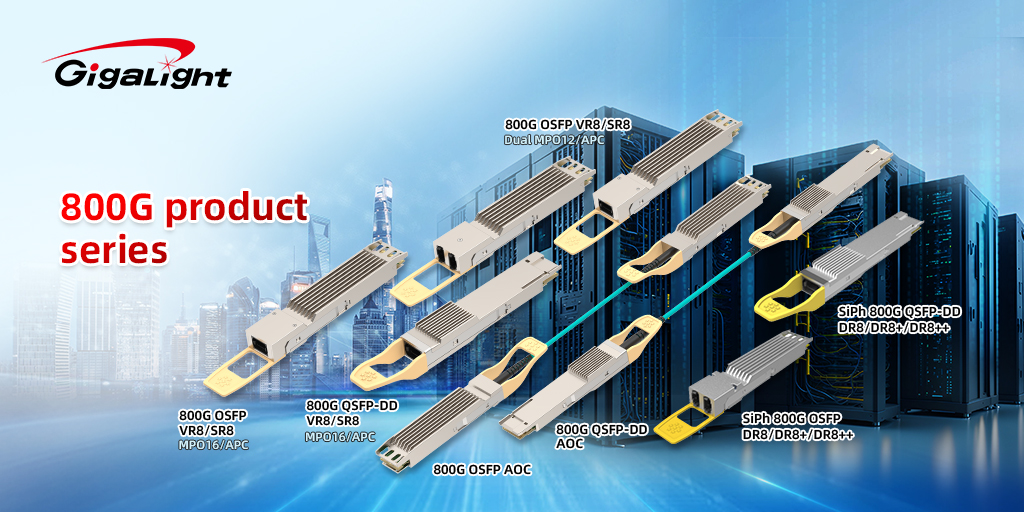

Introduction to GIGALIGHT 800G optical transceivers

In response to the surge in demand for 800G optical transceivers, Gigalight has successively launched short-distance 800G VR8/SR8 optical transceivers/AOCs based on VCSEL lasers and 800G DR8/DR8+/DR8++ optical transceivers based on silicon photonic technology. Gigalight’s silicon optical transceivers and multi-mode VCSEL optical transceivers constitute a complete interconnection solution for high-speed AI data centers.

The multi-mode series 800G VR8/SR8/AOC is equipped with high-performance 112Gbps VCSEL lasers and a 7nm DSP. The electrical host interface carries a 112Gbps PAM4 signal per channel and supports the CMIS version 4.0 protocol. The series is available in QSFP-DD and OSFP form factors. VR8 supports transmission distances of 30 meters (OM3 MMF) and 50 meters (OM4 MMF); SR8 supports 60 meters (OM3 MMF) and 100 meters (OM4 MMF). Two interface options are available, MPO16 and 2×MPO12, making the series suitable for short-distance data center application scenarios.

Silicon optical transceivers focus on interconnection scenarios with links exceeding 100 meters. Gigalight’s silicon optical 800G DR8 supports two form factors, QSFP-DD and OSFP. The DR8 transmission distance is 500m, DR8+ can transmit 2km, and DR8++ can transmit 10km. The transceiver uses four 1310nm CW lasers, and the maximum power consumption is less than 18W. The currently released version supports two connector architectures: MPO16/APC and Dual MPO12/APC. Compared with 800G optical transceivers based on the conventional 8-channel EML solution, the silicon optical transceiver can use fewer lasers. For example, 800G DR8 can use one laser to achieve lower power consumption. This year, Gigalight will also launch 800G QSFP-DD PSM DWDM8 and 800G OSFP/QSFP-DD 2×FR4 optical transceivers based on silicon photonics, providing customers with more choices.
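The reach figures quoted for the 800G family suggest a simple selection rule: pick the shortest-reach variant that still covers the link. The sketch below encodes that rule under stated assumptions: the multi-mode entries use the OM4 distances, preferring the shortest-reach variant is a planning heuristic rather than a vendor recommendation, and the names `REACH_M` and `pick_800g_variant` are hypothetical.

```python
from typing import Optional

# (variant, maximum reach in metres), ordered from shortest to longest reach.
# VR8 and SR8 use the OM4 multi-mode figures quoted in the text.
REACH_M = [
    ("VR8", 50),
    ("SR8", 100),
    ("DR8", 500),
    ("DR8+", 2_000),
    ("DR8++", 10_000),
]


def pick_800g_variant(length_m: float) -> Optional[str]:
    """Return the shortest-reach variant covering `length_m`, or None if none does."""
    for name, reach in REACH_M:
        if length_m <= reach:
            return name
    return None


if __name__ == "__main__":
    for length in (40, 100, 101, 1_500, 20_000):
        print(f"{length} m -> {pick_800g_variant(length)}")
```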

The adoption of 800G optical transceivers not only solves current challenges, but also provides forward-looking solutions to accommodate the expected growth in data processing and transmission. As technology advances, the synergy between artificial intelligence computing and high-speed optical communications will play a key role in shaping the future of information technology infrastructure.

What Are the Differences Among Temperature Grades in Optical Modules?

When selecting optical modules, in addition to the most common commercial grade based on operating temperature, we also encounter options such as extended grade and industrial grade. Why are optical modules divided into so many temperature grades, and what are the differences among them?

Differences in Temperature Grades of Optical Modules:

- Chip Tolerance to Temperature:Commercial grade optical modules operate in the temperature range of 0℃ to 70℃.Extended grade operates in the temperature range of -20℃ to +85℃.Industrial grade optical modules have a wider temperature range of -40℃ to 85℃, matching their supported operating temperatures.

- Testing Methods:Commercial grade optical modules undergo normal temperature aging testing.Industrial grade optical modules, on the other hand, undergo high and low-temperature aging testing.

- Price Variation:Industrial grade optical modules incur additional material and manufacturing costs due to physical cooling and temperature compensation. Therefore, under the same parameters such as transmission rate and wavelength, industrial grade optical modules are generally more expensive than commercial grade optical modules.

- Application Scenarios: Commercial grade optical modules are the most common and widely used products in the market, typically applied in enterprise networks, data centers, and server rooms. Extended grade optical modules are designed for relatively harsh environments with significant temperature variations, such as remote mountainous areas and tunnels. Industrial grade optical modules, which tolerate even lower temperatures than the extended grade, are often used in conjunction with industrial-grade Ethernet switches. They find applications in industrial Ethernet scenarios, including factory automation, railways, intelligent transportation systems (ITS), power facilities, marine, oil, natural gas, mining, and other industries. Industrial grade optical modules can ensure persistent and stable transmission in challenging working environments.
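As a rough illustration of how the temperature ranges above might drive a selection, the following sketch picks the narrowest grade that covers a site's expected operating temperatures. The function name `narrowest_grade` and the idea of choosing the narrowest covering grade are assumptions made for this example, not a GIGALIGHT tool or recommendation; only the three ranges come from the text.

```python
from typing import Optional

# Operating ranges in degrees Celsius, taken from the grades listed above.
# Ordered from narrowest to widest range.
GRADES = [
    ("commercial", 0, 70),
    ("extended", -20, 85),
    ("industrial", -40, 85),
]


def narrowest_grade(min_c: float, max_c: float) -> Optional[str]:
    """Return the first (narrowest) grade whose range covers [min_c, max_c].

    Hypothetical helper for illustration; returns None if no grade covers
    the requested range.
    """
    for name, low, high in GRADES:
        if low <= min_c and max_c <= high:
            return name
    return None


if __name__ == "__main__":
    print(narrowest_grade(5, 60))     # server room
    print(narrowest_grade(-10, 75))   # tunnel
    print(narrowest_grade(-35, 80))   # outdoor industrial site
```

Because industrial grade modules generally cost more, starting from the narrowest range that fits is one plausible way to avoid over-specifying; actual selection would also weigh the testing and application factors listed above.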

Exploring the Advantages of 200G (8x25G NRZ) Optical Technology in Data Centers

GIGALIGHT, which has focused on optical communication for eight years, directs your attention to the 200G (8x25G NRZ) technology, delving into its advantages such as low power consumption, low latency, and easy deployment. This provides a deeper understanding of cost-effective optical interconnection solutions within data centers.

There are two technological directions for 200G optical modules: one utilizes the QSFP-DD packaging for an economical 8x25G NRZ network, while the other employs QSFP56 packaging for a 4x50G PAM4 network (to be discussed in a subsequent article).

Compared to PAM4 technology, 200G (8x25G NRZ) boasts advantages in low power consumption, low latency, and easy deployment.

Technical details:

QSFP-DD packaging for 8x25G NRZ network: The 200G optical module uses QSFP-DD packaging, transmitting 25G NRZ signals through 8 channels. NRZ (non-return-to-zero) signaling switches between two levels, high and low, so each symbol carries one bit. Compared to PAM4 signals, which use four levels, NRZ signals are easier to modulate and demodulate.
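The lane arithmetic behind the two 200G approaches can be sketched as follows. The `LaneConfig` class is a hypothetical helper written for this example, and the rates are the nominal 25G and 50G figures used in the text rather than exact line rates. The bits-per-symbol values reflect the definitions of the two formats: NRZ uses two levels (1 bit per symbol) and PAM4 uses four levels (2 bits per symbol).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LaneConfig:
    """Nominal lane layout of an optical module (illustrative only)."""
    name: str
    lanes: int
    lane_rate_gbps: float   # nominal bit rate per lane
    bits_per_symbol: int    # 1 for NRZ, 2 for PAM4

    @property
    def aggregate_gbps(self) -> float:
        # Total module bandwidth is lanes times per-lane rate.
        return self.lanes * self.lane_rate_gbps

    @property
    def symbol_rate_gbd(self) -> float:
        # Symbols per second on each lane, in gigabaud.
        return self.lane_rate_gbps / self.bits_per_symbol


NRZ_8X25 = LaneConfig("QSFP-DD 8x25G NRZ", lanes=8, lane_rate_gbps=25.0, bits_per_symbol=1)
PAM4_4X50 = LaneConfig("QSFP56 4x50G PAM4", lanes=4, lane_rate_gbps=50.0, bits_per_symbol=2)

if __name__ == "__main__":
    for cfg in (NRZ_8X25, PAM4_4X50):
        print(f"{cfg.name}: {cfg.aggregate_gbps:.0f}G total, "
              f"{cfg.symbol_rate_gbd:.0f} GBd per lane")
```

Both layouts reach the same nominal 200G aggregate at the same nominal symbol rate per lane; the NRZ variant gets there with twice as many lanes and simpler two-level signaling.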

Low power consumption: Utilizing 25G NRZ optical components, the module’s power consumption is reduced by 2–3W compared to modules based on PAM4 DSP CDR technology. This offers significant advantages for data center operational costs (OPEX) and cooling efficiency.
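To give a sense of scale, the per-module saving can be multiplied across a deployment. In the sketch below, only the 2–3W range comes from the text; the function name `fleet_saving_watts` and the module count of 10,000 are hypothetical values chosen for illustration, and cooling overhead is not modeled.

```python
from typing import Tuple

# Per-module saving versus PAM4 DSP CDR modules, as stated in the text (watts).
SAVING_LOW_W = 2.0
SAVING_HIGH_W = 3.0


def fleet_saving_watts(module_count: int) -> Tuple[float, float]:
    """Return the (low, high) total power saving in watts for a fleet.

    Hypothetical helper: simply scales the quoted per-module range.
    """
    if module_count < 0:
        raise ValueError("module_count must be non-negative")
    return (module_count * SAVING_LOW_W, module_count * SAVING_HIGH_W)


if __name__ == "__main__":
    low, high = fleet_saving_watts(10_000)  # assumed fleet size
    print(f"Estimated saving: {low / 1000:.0f} to {high / 1000:.0f} kW")
```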

Low latency: 200G NRZ excels in special application scenarios with stringent network latency requirements, such as high-frequency trading networks. The transmission method of NRZ signals provides an advantage in terms of latency.

Deployment ease:

Mature industry chain: The technology of 25G NRZ optical chips and components is relatively mature, providing a reliable foundation for the production of 200G NRZ. This makes the development of related 200G switch ports and optical module products relatively easy, facilitating rapid commercial deployment.

Low-cost optical interconnection: Due to mature technology and industry chains, 200G NRZ achieves low-cost optical interconnection within data centers. This offers an economically effective solution for the construction and expansion of large-scale data centers.

200G QSFP-DD SR8

The 200G QSFP-DD SR8 is a multimode parallel product, utilizing an 8-channel 850nm VCSEL array, compliant with the 100GBASE-SR4 protocol standard. Leveraging the advantages of traditional VCSEL platforms, GIGALIGHT has adopted a simple, efficient, and reliable fiber coupling process. By introducing a 45° prism between the laser and the fiber, along with special material treatment on the fiber surface, the fiber coupling efficiency has been increased to over 80%.

200G QSFP-DD PSM8

The 200G QSFP-DD PSM8 is a high-speed product utilizing single-mode parallel technology. In comparison to wavelength division multiplexing (WDM) technology, PSM technology offers cost advantages. GIGALIGHT's PSM optical module enables error-free long-distance transmission up to 10km, five times the distance achieved by traditional PSM optical modules.

200G QSFP-DD LR8

The 200G QSFP-DD LR8 optical module is based on an 8-channel LAN-WDM wavelength division multiplexing design, achieving a 1E-12 bit error rate over a transmission distance of 20km. In the context of high-reliability 5G middle-backhaul transmission systems, the long-term transmission of PAM4 signals may pose challenges such as insufficient bandwidth. The 200G QSFP-DD LR8, however, offers a high-performance, low-latency, and highly reliable channel solution.

GIGALIGHT has recently made significant strides in optical design, notably in the development of 800G optical modules, where the design of 800G SR8/DR8 has been completed. Moreover, GIGALIGHT has successfully mass-produced and deployed 8-channel optical products in niche markets globally, covering 200G, 400G, and 800G. Visit our official website for further information.
